OpenGVL: Benchmarking Visual Temporal Progress for Data Curation

Published in CoRL 2025 workshop, 2025

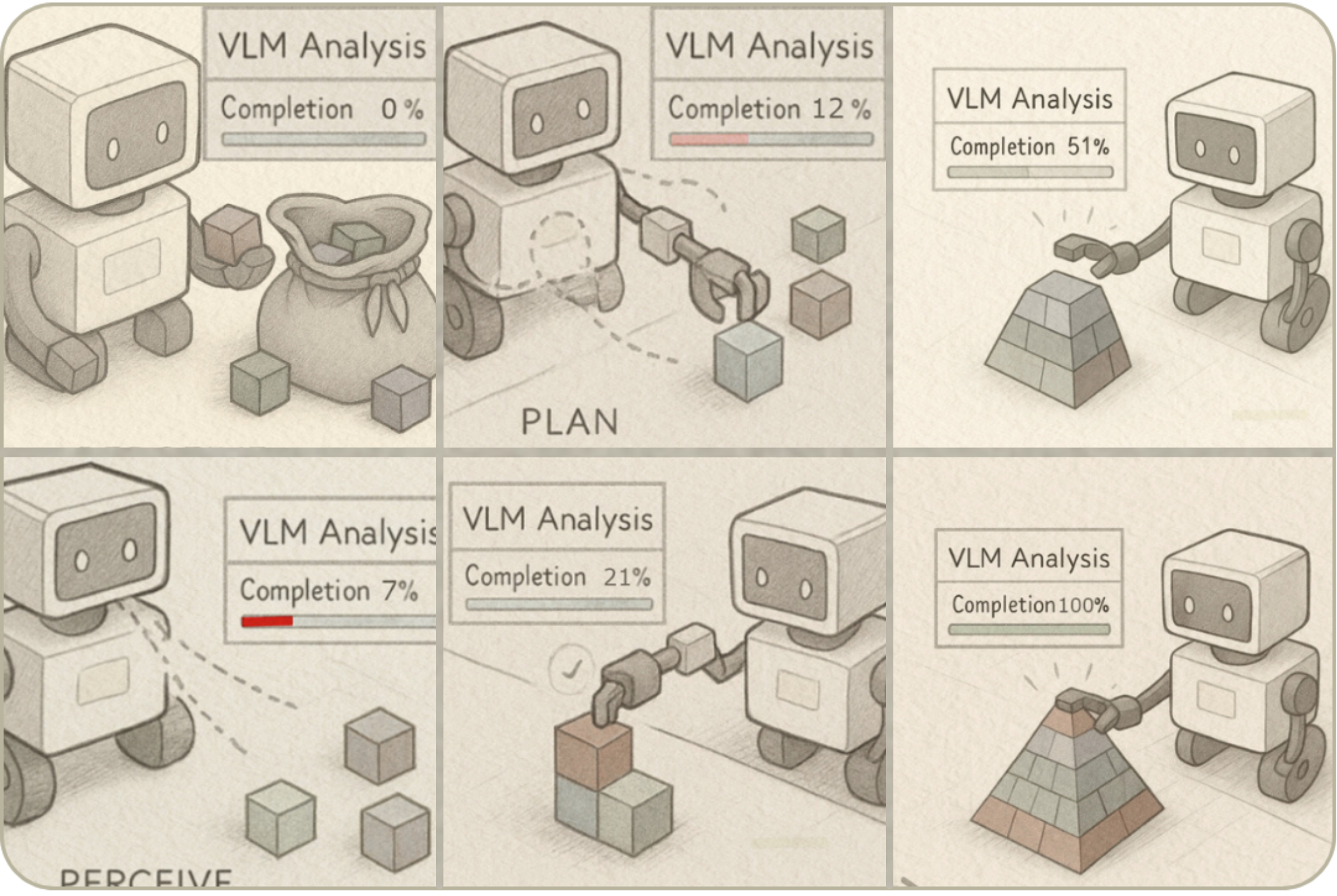

OpenGVL evaluates how vision-language models estimate temporal progress in robotics videos. It supports automated dataset curation by predicting task completion from video frames.

The benchmark uses Value-Order Correlation (VOC), a rank correlation between predicted progress and true time order. It includes shared prompts, data loaders, configs, and hidden tasks for contamination control.
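To make the metric concrete, here is a minimal sketch of how a VOC score could be computed for one video. It assumes the rank correlation is Spearman's; the description above says only "a rank correlation". The function names `average_ranks` and `value_order_correlation` are hypothetical and are not taken from the OpenGVL codebase. Returning 0.0 for constant predictions is also a convention chosen for this illustration.

```python
from statistics import mean


def average_ranks(values):
    """Return 1-based ranks; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        # Extend the window over all entries tied with the current value.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        shared = (start + end) / 2 + 1
        for k in range(start, end + 1):
            ranks[order[k]] = shared
        start = end + 1
    return ranks


def value_order_correlation(predicted_progress):
    """Spearman correlation between per-frame predictions and frame order.

    `predicted_progress[i]` is the predicted completion for the i-th frame,
    with frames listed in their true chronological order.
    """
    n = len(predicted_progress)
    if n < 2:
        raise ValueError("need at least two frames")
    pred_ranks = average_ranks(predicted_progress)
    time_ranks = [float(i + 1) for i in range(n)]
    mean_p, mean_t = mean(pred_ranks), mean(time_ranks)
    cov = sum((p - mean_p) * (t - mean_t) for p, t in zip(pred_ranks, time_ranks))
    var_p = sum((p - mean_p) ** 2 for p in pred_ranks)
    var_t = sum((t - mean_t) ** 2 for t in time_ranks)
    if var_p == 0:
        # Constant predictions carry no ordering information (assumed convention).
        return 0.0
    return cov / (var_p * var_t) ** 0.5


# Predictions that rise monotonically with time score 1; reversed ones score -1.
print(value_order_correlation([0, 10, 40, 80, 100]))
print(value_order_correlation([100, 80, 40, 10, 0]))
```

Under this reading, a score near 1 means the predicted progress follows the true frame order, a score near 0 means the predictions say little about order, and a negative score means they run against it.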

Recommended citation: Budzianowski, P., Wiśnios, E., Tyrolski, M., Góral, G., Kulakov, I., Petrenko, V., & Walas, K. (2025). OpenGVL: Benchmarking Visual Temporal Progress for Data Curation. arXiv preprint arXiv:2509.17321.
